Here I provide some background material and references about my research interests.

With the discovery of the Higgs boson at the LHC, recognised with the Nobel Prize in 2013, particle physics has entered a new era. The Higgs boson, responsible for giving mass to the known elementary particles, was the last missing element of the extremely successful Standard Model (SM), but at the same time its discovery raises a number of fascinating new questions that need to be addressed. The challenge for particle physics in the coming years is to understand in detail the properties of this new particle, the first ever fundamental scalar: any deviation with respect to the SM predictions would be a smoking gun for New Physics beyond the SM (BSM). In addition, the LHC will continue the exploration of the high-energy frontier with the search for exotic heavy particles, new forces or additional space-time dimensions. The LHC program also has profound implications for exciting open problems in astronomy and cosmology: for example, many scenarios predict the production of dark matter particles at the LHC.

For some background information about particle physics and LHC phenomenology, you can watch my lecture at Oxford’s Saturday Mornings of Theoretical Physics.

Parton Distribution Functions

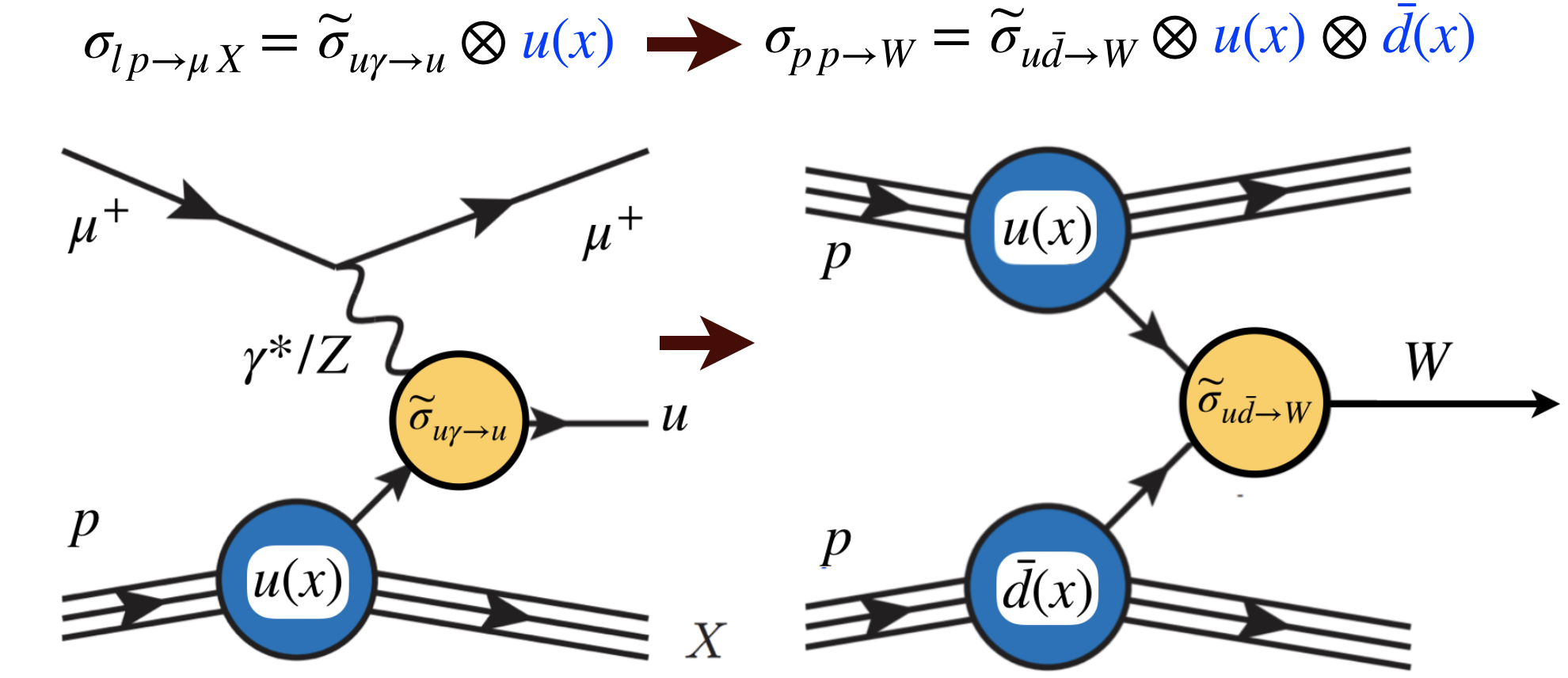

It is therefore of utmost importance to provide accurate theoretical predictions for the most relevant processes at the LHC, including Higgs production, as well as for a variety of New Physics scenarios. Crucial ingredients of these predictions are the Parton Distribution Functions (PDFs) of the proton, which encode the dynamics determining how the energy of the protons that collide at the LHC is split among its fundamental constituents, the quarks and gluons. Being intrinsically non-perturbative, PDFs cannot be computed from first principles, and thus need to be extracted from experimental data, in the context of the so-called global QCD analysis framework.
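As a schematic reminder of where the PDFs enter, a hadronic cross-section at the LHC can be written in the standard collinear factorisation form below. The notation is an assumption made for illustration: $f_i$ is the PDF of parton $i$, $x_{1,2}$ are the momentum fractions carried by the colliding partons, $\hat{\sigma}_{ij}$ is the perturbatively calculable partonic cross-section, $s$ is the squared center-of-mass energy, and $\mu_F$, $\mu_R$ are the factorisation and renormalisation scales.

$$
\sigma(pp \to X) = \sum_{i,j} \int_0^1 dx_1 \int_0^1 dx_2 \; f_i(x_1, \mu_F^2)\, f_j(x_2, \mu_F^2)\; \hat{\sigma}_{ij \to X}(x_1 x_2 s, \mu_F^2, \mu_R^2)
$$

Any uncertainty on the $f_i$ therefore propagates directly into the predicted cross-section, whatever the accuracy of $\hat{\sigma}_{ij}$.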

Our limited knowledge of parton distributions degrades the scientific output of many important LHC analyses. To begin with, PDFs are one of the dominant theory systematics for Higgs production cross-sections, limiting the best precision that can be achieved in the extraction of Higgs couplings. PDF uncertainties also affect high-mass particle production at the LHC, ubiquitous in BSM scenarios: for example, the cross-section for the production of a pair of gluinos, the supersymmetric partners of the gluon, can be affected by uncertainties of up to 100%. Moreover, PDF uncertainties are also the largest theory uncertainty in precision SM measurements such as the determination of the W mass at hadron colliders.

I am among the developers of a novel approach to the determination of PDFs based on Artificial Neural Networks (ANN) and Machine Learning techniques, the so-called NNPDF approach. In this framework, PDFs learn the underlying physical law from experimental data without the need to impose any prior knowledge. The ANN training is performed using Genetic Algorithms, non-deterministic minimization strategies suitable for the solution of complex optimization problems, for instance when a very large number of quasi-equivalent minima are present. Non-linear propagation of uncertainties from the fitted data to the PDFs is achieved by means of Monte Carlo replicas, in contrast to the usual Hessian method, whose validity is restricted to the Gaussian (linear) regime of PDF uncertainties.
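The Monte Carlo replica idea can be illustrated with a minimal toy sketch. This is not the NNPDF code: a weighted polynomial fit stands in for the neural network and its genetic-algorithm training, and the data points, uncertainties, and function names (`fit_replicas`, `predict`) are all invented for illustration. The only point is to show how fitting many fluctuated copies of the data yields a central value and an uncertainty without any linear approximation.

```python
import numpy as np

def fit_replicas(x, y, sigma, n_rep=200, degree=2, seed=0):
    """Fit one polynomial per pseudo-data replica (toy stand-in for an ANN fit)."""
    rng = np.random.default_rng(seed)
    coeffs = []
    for _ in range(n_rep):
        # Fluctuate each data point within its quoted uncertainty.
        y_rep = y + sigma * rng.standard_normal(y.shape)
        # Weighted least-squares fit to this replica (w = 1/sigma).
        coeffs.append(np.polyfit(x, y_rep, degree, w=1.0 / sigma))
    return np.array(coeffs)

def predict(coeffs, x_new):
    """Central value and uncertainty as mean and std over the replica fits."""
    preds = np.array([np.polyval(c, x_new) for c in coeffs])
    return preds.mean(axis=0), preds.std(axis=0, ddof=1)

# Toy "data": a smooth function plus Gaussian noise (all values invented).
x = np.linspace(0.05, 0.95, 20)
sigma = np.full_like(x, 0.05)
truth = x * (1.0 - x)
y = truth + sigma * np.random.default_rng(1).standard_normal(x.shape)

coeffs = fit_replicas(x, y, sigma)
mean, err = predict(coeffs, np.array([0.5]))
print(f"f(0.5) = {mean[0]:.3f} +- {err[0]:.3f}")
```

Because the uncertainty is read off from the spread of the replica ensemble, the same recipe applies unchanged when the fitted function depends non-linearly on the data, which is the situation where a Hessian approximation is least reliable.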

One recent breakthrough in PDF analysis has been the use of LHC Run I data to constrain PDF fits. I have studied the inclusion of LHC data into PDF fits, from direct photons to top quark pair production cross-sections and charmed meson differential distributions. LHC data is now a central ingredient of PDF fits, providing vital information on poorly known PDFs such as the large- and small-x gluon or the large-x antiquarks. I have also explored novel ways to exploit the LHC data to provide PDF information, for instance with the proposal of using the ratios of cross-sections between different center-of-mass energies, which benefit from high PDF sensitivity and reduced experimental and theory systematics due to cancellations in the ratio.
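The cancellation of systematics in such ratios can be made explicit with standard linear error propagation. The symbols here are introduced only for illustration: $\delta_1$ and $\delta_2$ are the relative uncertainties on the two cross-sections from a given source, and $\rho$ is their correlation coefficient.

$$
R = \frac{\sigma(E_2)}{\sigma(E_1)}, \qquad \left(\frac{\delta R}{R}\right)^2 = \delta_1^2 + \delta_2^2 - 2\rho\,\delta_1\delta_2
$$

For a source that is fully correlated between the two energies ($\rho = 1$) and of equal size ($\delta_1 = \delta_2$), the uncertainty on $R$ vanishes in this approximation, whereas uncorrelated sources ($\rho = 0$) add in quadrature.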

In the figure below I show a snapshot of our current understanding of the proton structure in terms of unpolarized (NNPDF3.0, left plot) and polarized (NNPDFpol1.1, right plot) parton distributions, from the Particle Data Group review.

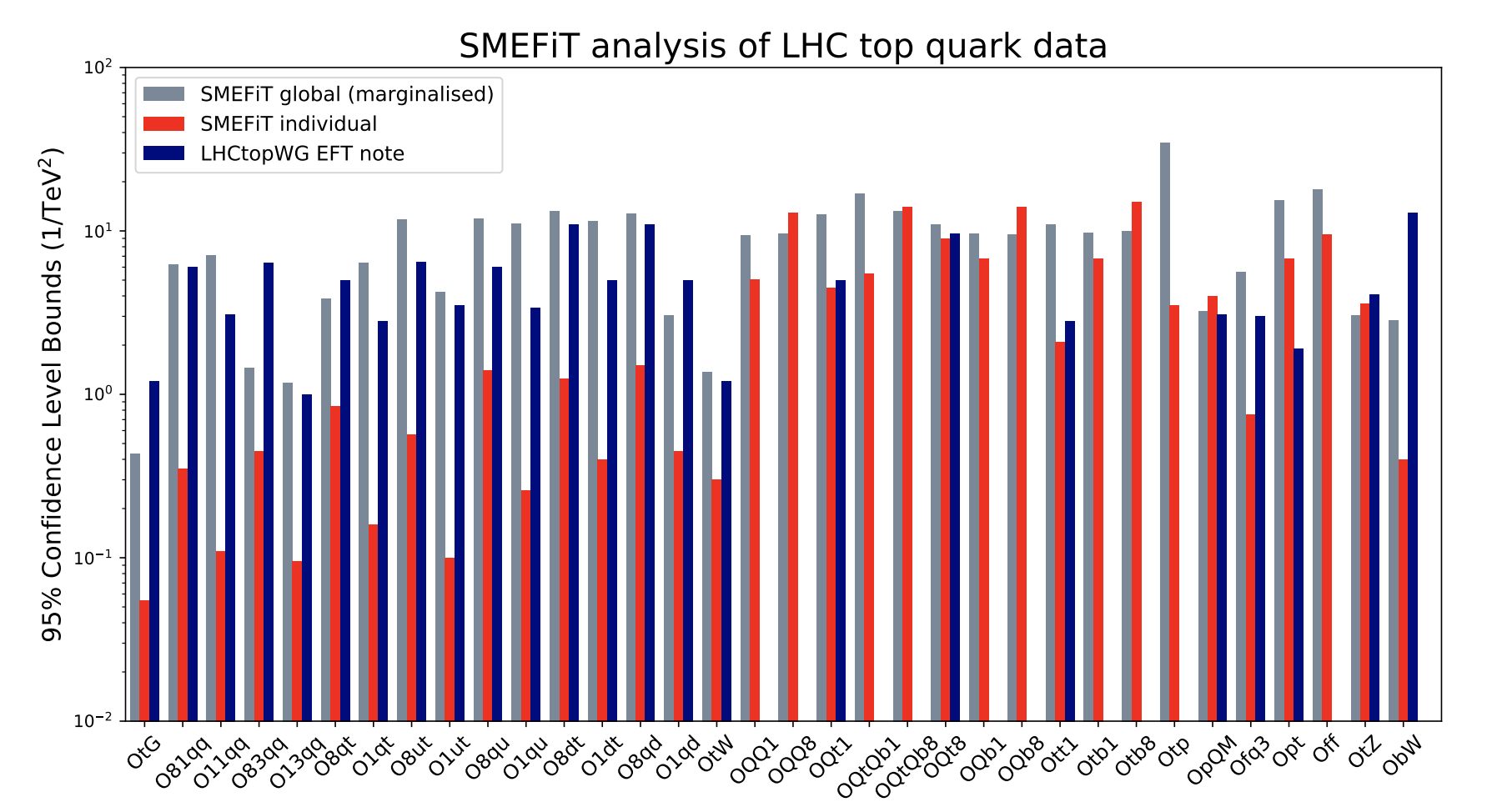

The Standard Model Effective Field Theory

The theoretical interpretation of the LHC measurements requires a robust framework that is model-independent, is systematically improvable with higher-order perturbative calculations, and parametrises the effects of candidate theories beyond the Standard Model (BSM) that reduce to the SM at LHC energies. These stringent conditions are satisfied by the Standard Model Effective Field Theory (SMEFT), which provides a theoretically elegant language to encode in full generality the modifications of the Higgs properties induced by possible BSM particles and interactions beyond the LHC reach.
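Schematically, and in a notation chosen here for illustration, the SMEFT extends the SM Lagrangian with higher-dimensional operators built from SM fields and suppressed by powers of the new physics scale $\Lambda$. Keeping only the leading dimension-six terms (the single dimension-five operator violates lepton number and is usually neglected in LHC analyses):

$$
\mathcal{L}_{\rm SMEFT} = \mathcal{L}_{\rm SM} + \sum_i \frac{c_i}{\Lambda^2}\, \mathcal{O}_i^{(6)} + \mathcal{O}\!\left(\Lambda^{-4}\right)
$$

Here the $\mathcal{O}_i^{(6)}$ are the dimension-six operators and the $c_i$ are dimensionless Wilson coefficients, which vanish in the SM and are the quantities to be constrained from data.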

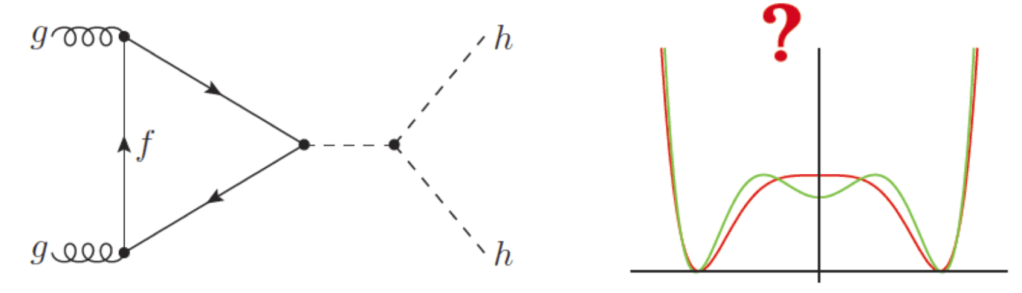

Higgs pair production and jet substructure

Another of my research lines is the exploitation of perturbative QCD calculations for the internal structure of jets in hadronic collisions. Jet substructure methods make it possible to disentangle QCD jets from jets arising from the decays of heavy resonances, and at the LHC they are crucial to optimize the exploration of the Higgs sector and in searches for BSM physics. In the paradigmatic example, jet substructure methods can turn associated Higgs production in the bb̄ final state into a discovery channel despite the overwhelming QCD background. At the LHC, many searches for heavy new physics at the TeV scale rely heavily on jet substructure methods.

One of the cornerstones of the LHC program for the next years will be Higgs pair production, a process for which jet substructure techniques are crucial, in particular when one (or both) of the Higgs bosons decays into a bb̄ pair. The measurement of the production of Higgs pairs is necessary in order to reconstruct the entire electroweak symmetry breaking potential. While in single Higgs production we can only access the local region around the minimum, in double Higgs production we can reconstruct the full Higgs sector potential. It is difficult to overestimate the importance of this process: not only would it provide the first evidence that Higgs bosons have self-interactions, but it would also probe the most ad hoc sector of the Standard Model, the Higgs potential. Therefore, measuring Higgs pair production could be the key to unveiling the origin of the mechanism of electroweak symmetry breaking and could open a new window to physics beyond the SM.
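To make this concrete, the SM Higgs potential expanded around its minimum reads as follows, where $h$ is the physical Higgs field, $v \simeq 246$ GeV is the vacuum expectation value, and $\lambda$ is the quartic coupling (textbook SM relations, written in a notation chosen here for illustration):

$$
V(h) = \frac{1}{2} m_h^2\, h^2 + \lambda v\, h^3 + \frac{1}{4} \lambda\, h^4, \qquad \lambda = \frac{m_h^2}{2 v^2} \simeq 0.13 \quad \text{for } m_h \simeq 125~\text{GeV}
$$

Single Higgs measurements fix only the quadratic term through $m_h$, while the cubic term $\lambda v h^3$, the trilinear self-coupling, first becomes directly accessible in Higgs pair production. In the SM its value is fully predicted once $m_h$ and $v$ are known, so any measured deviation would signal new physics.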

My studies have concentrated on the 4b final state, characterised by the highest signal yields, but where the huge QCD background needs to be reduced by several orders of magnitude. For the analysis of the resonant case, where di-Higgs production is mediated by a heavy resonance, as in scenarios with extra dimensions, we developed the scale-invariant resonance tagging method. The basic idea of this technique is the consistent combination of all possible final-state topologies (boosted, resolved and intermediate) into a common optimised analysis. This study made it possible to probe a wide range of the BSM parameter space, in particular from low to very high radion and graviton masses.

The same techniques have also been used in a feasibility study which demonstrates how, using the 4b final state, it might be possible to measure the Higgs self-coupling already at Run II, earlier than previous estimates suggested. This result was achieved thanks to the use of advanced Multivariate Analysis (MVA) techniques. In particular, we used Artificial Neural Networks (ANN) to enhance the discrimination of signal over background events. The MVA is trained on the kinematics of signal and background events (including jet substructure variables), which makes it possible to derive a non-trivial set of highly correlated cuts that lead to a high signal significance at the High-Luminosity LHC.

Astrophysical implications of collider measurements

I believe that it is always fascinating to cross boundaries between research fields, and I am always keen to explore the implications of high-precision QCD calculations beyond the collider framework. My first excursion into astro-particle physics was a direct determination of the conventional neutrino flux from Super-Kamiokande data using Artificial Neural Networks, applying in a completely new context a methodology that was being developed for proton PDF fits. This calculation has been widely used by the neutrino community.

More recently, we have completed an updated calculation of the prompt neutrino flux at IceCube. The IceCube experiment at the South Pole claimed in 2013 evidence of the first ever detection of astrophysical neutrinos, opening a whole new window to the Universe. In these measurements, it is important to provide careful estimates of all relevant backgrounds, which in this case are dominated by the prompt flux of neutrinos from the decays of charm produced in cosmic-ray collisions in the atmosphere. The key ingredient of this calculation was the exploitation of the constraints from the LHCb measurements of forward charm production to reduce the PDF uncertainties of the poorly known gluon at very small x. Our results, together with recent IceCube bounds, indicate that a first direct measurement of this prompt flux is now within reach, which would allow a final vindication of the cosmological nature of the highest energy neutrinos ever measured.

The figure below compares our updated predictions for the prompt neutrino flux as a function of the neutrino energy, including theoretical uncertainties (blue band), with a previous benchmark calculation (ERS) and with a direct exclusion bound from the analysis of the IceCube data (dotted red line).